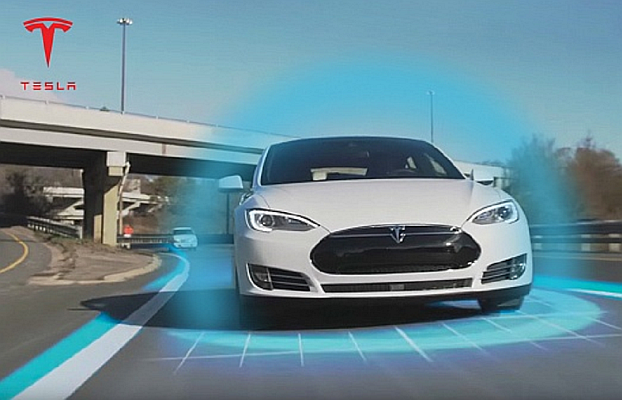

Some Tesla owners recently found themselves confused by a strange glitch in the Model 3’s Autopilot system: the car kept slamming on the brakes in the middle of the same stretch of road.

Eventually, they figured it out: the car was registering a giant stop sign printed on a nearby billboard as a real traffic sign, and therefore deciding that the right course of action was to come to a halt in the middle of the road.

A Tesla with Autopilot enabled would spot the sign and drop from 35 miles per hour to a full stop in the middle of the road.

The owners remain optimistic that Tesla will find a fix, and say that it will just take more training on edge cases like this before self-driving technology is truly ready.

But while each individual edge case may be a unique, small-scale Autopilot glitch, the totality of unusual scenarios a car might encounter represents a whole world of chaos that is currently too complex for even the most sophisticated algorithms to untangle.

It’s a comical glitch, to be sure, but the edge case also illustrates the myriad ways that self-driving (Autopilot) car software continues to make mistakes, some of which could have deadly results.

References: Jalopnik, Twitter, YouTube, Futurism, EV Obsession